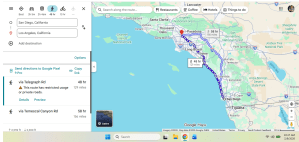

Modern technology has transformed a lot of what we do, and in some ways, it’s very beneficial. For instance, the GPS or satnav in your car or phone can get you wherever you want to be, even if you’re in another country. Need to know where the nearest pharmacy is? Just ask Siri or Alexa.

AI has become very useful in the professions, too. For instance, it used to be that a researcher had to search through volumes of material to find articles that would be relevant to a study. That process, plus requesting copies of those articles, plus waiting to receive them, could add weeks or more to a research plan. Today, it’s a matter of online searches for materials, downloading copies of articles, and perhaps contacting the authors of those articles. Sometimes those articles are behind paywalls, and some articles are still hard to find. But the overall process is much easier.

While most of us would agree that technology like AI has a lot to offer, there are also serious concerns about it, and an interesting post from Bill Selnes at Mysteries and More From Saskatchewan has prompted me to think about the issues. Bill’s post has to do with an AI chatbot and its role in a young person’s death; in that sense, it’s a set of legal questions. I encourage you to read Bill’s fine article about that issue and how Michael Connelly’s The Proving Ground addresses it.

The use of AI in education raises at least as many questions. On the one hand, why shouldn’t students use, say, ChatGPT to give them examples of math problems, or to show them what a declarative sentence or a simile looks like? On the other, students benefit greatly from doing their own exploration, making their own mistakes as they learn mathematics, and so on. In fact, Sweden has reintroduced paper textbooks and paper/pencil writing; since that time, students’ critical thinking and content knowledge have improved. If students don’t have experience writing their own essays, doing their own lab studies, and so on, they risk not learning those skills. They don’t acquire the nuances of writing – especially writing to varied audiences. Their critical thinking skills arguably aren’t supported, either. There are other drawbacks, too, of heavy reliance on ChatGPT and other AI in the classroom. For instance, AI doesn’t always give correct answers. The very tentative conclusion seems to be that AI has a role to play, but it must be moderated and closely supervised by a well-prepared teacher whose focus is on teaching critical thinking and skills, and more importantly, whose focus is the individuals in the classroom, and their learning.

AI also presents major issues in the world of writing. When I have a particular question for a novel I’m writing (e.g. how long can the police in [place] hold someone without pressing charges?), AI can be very helpful; I get an answer within a second or two. But – and this is an important ‘but’ – it may not be accurate. AI does sometimes get it wrong. Even GPS directions are not always completely accurate. That said, though, assuming one checks accuracy, AI does offer a wealth of that sort of background information for a writer. It’s helpful for grammar questions, too.

There are a lot of serious drawbacks to AI in writing, though. For one thing, if a writer has AI create a story or a novel, that’s not really that particular writer’s work. That raises all sorts of problems with respect to copyright and other matters. For another, AI can miss many nuances and depths of writing that a human author adds to a story. So, the story doesn’t have the layers that readers want. AI-generated work also arguably makes it much harder for human writers to get their work ‘out there.’ And (yes, I know this is repetitive), AI doesn’t always produce accurate, well-polished results. There are other problems, too, with unchecked AI in the world of writing.

There are similar problems with AI-generated music and visual art. It’s the artist’s vision of the piece that makes that piece unique. AI doesn’t necessarily preserve that vision. AI may be able to allow a musician to listen to a particular music passage for inspiration, but that doesn’t mean it substitutes for that musician’s skill at creating new music. Perhaps AI can show a visual artist an example of Joan Miró’s work for inspiration, but it doesn’t bring the artist’s vision to life. And, as is the case with writing, AI raises many copyright and other legal questions in the fields of music and visual art.

The fact is, AI isn’t going away. Acknowledging AI’s benefits, drawbacks, and implications may help us to harness the power of AI technology in ways that support, but don’t supplant, human interaction and effort. Ignoring AI won’t accomplish that. Having conversations about AI, setting boundaries for its use, and taking steps to manage it will hopefully mean that AI’s existence won’t reduce our humanity. What do you think of AI’s use? What do you think its limits should be? Thank you, Bill, for such important ‘food for thought.’

*NOTE: The title of this post is a line from the Moody Blues’ In the Beginning.

A really thoughtful response to the AI issue, Margot. I have grave reservations about it, from the basic ones about it making people lazy and the death of critical thinking and learning, to the more sinister aspects. It worries me how people will misuse it, whether for political or criminal ends, and how people are already so gullible when it comes to believing anything it serves up. So I think you are right – there need to be very strict boundaries…. 😕

Thank you, KBR. I’ve been thinking about the whole AI issue a lot, as you can imagine. It’s not going away, so I think we have to face and determine just what role it will play. Like you, I do have serious concerns (and you’ve laid them out very neatly!). As you say, people really do need critical thinking and the ability to make meaning from what they experience. AI doesn’t make it easy to teach those skills. And gullibility is dangerous. I don’t know exactly what the future of AI is, but it does need to have boundaries!

Margot: Thanks for the kind words. Every profession, indeed every person, is wrestling with the role of AI in the world. Until reading The Proving Ground I had not realized how AI, through chatbots, could be insidious. We do not know yet if forms of AI will be considered legal persons like corporations. I think it inevitable.

More important, I appreciate your reflections on the risks and benefits of AI.

At this time I feel governments around the world are declining to regulate AI. I believe their hesitation is a mistake. Certainly it will be hard to make laws concerning AI but it does not fit well within the current legal system. Ignoring a problem is rarely a solution.

You’re quite right, Bill, that we’re all faced with the challenge of AI and what its role will (and should) be. As you point out very effectively in your last sentence, ignoring the issue will not solve the problem. In fact, it could very likely create bigger problems. It really would behoove us as a society to regulate AI and make some clear decisions about it. It’s difficult, and there will not be an easy consensus. But AI is being developed awfully quickly, and it would be best to deal with its implications as soon as possible.

I appreciate your legal opinion on whether AI will be considered a legal entity. I can see why you think that’s inevitable. We’ll have to see what happens in that instance. In the meantime, I am glad Connelly chose to address the issue in The Proving Ground. And I appreciate your thoughtful post on the book and the topic. It got me thinking, and I am grateful for that.

All the varied books and authors that you often quote in your many articles: are they from your own research or AI?

Interesting question, Terry. I usually do my own research. I may look up the spelling of someone’s name or something like that, but for the most part, I do my own stuff. I’ve found that AI doesn’t always give accurate answers.

I use it quite a lot as a reference library and a proof-reader, but I would still worry about it being used too much in schools. For a start, as you point out, it isn’t always right. It doesn’t always understand nuance, but is quite decided in its opinions. So it will advise me to change something, and the change it suggests would totally change the tone or sometimes even the meaning of the sentence. Or it would mean the next sentence no longer flows naturally. I’m an opinionated old so-and-so (as you know!) with many years of writing experience, so I cheerfully ignore it or tell it my word choice is better, or even just that I wouldn’t use a word it wants me to use, but I wonder if kids would be more likely to accept that AI knows best. I think AI-written articles are fairly obvious to anyone who chats to AI – it has a fairly limited range of tones and phrases that it repeats ad nauseam. I can’t imagine reading an entire book written by it – soporific!

Thanks very much for your insights, FictionFan. You outline so clearly the sorts of problems that come with overdependence on AI. The changes that AI suggests certainly don’t always improve writing, do they? And as you show, AI-generated writing is just different to human writing. They really are not the same, and I wouldn’t want to read an entire AI-generated book, either. The passion, if that’s the word, of human writing is just not there, and it makes a difference. ‘Soporific’ is a good word for it!

You make an important point, too, about your own experience as a writer. I think that’s a crucial point. Young people don’t have those skills, which can mean they rely too much on what AI suggests, instead of thinking critically about what they want to say. And therein lies the problem. Young people then never learn to actually master writing themselves.

Many grave concerns, that’s for sure. As a freelance editor, I’m seeing a huge divide between those who are “writing” their books with AI and those who use it only as a research tool.

Thanks very much, Becky, for your insights. I could well imagine that AI-generated books are very different to books that authors actually write themselves. As I’m sure you know, AI can be useful for doing background research. But writing with AI is a different matter altogether. So, yes, there is real concern about AI in writing.

I find the topic of AI disturbing, even though I had not thought of all the issues that you and Bill have addressed in your posts. (And thanks for pointing me to Bill’s post, which I had missed.)

I cannot believe that AI will ever successfully create novels or stories that would have the depth that a human can produce, but I am very worried about the effect of AI and many other facets of technology in relation to education.

AI brings with it a lot of potential problems, Tracy, and you’re by no means the only one who’s worried about its impact. You have a good point, too, that we do have to think about the role AI should play in education. Left unrestricted, it could have serious consequences for school and learning. As for books, I agree with you that AI-generated books don’t have the depth or nuances that books written by humans have.

I’m glad you took the time to read Bill’s post. I think it’s excellent, and it inspired this post.